UniFi Evolution: Deep Sentinel and Ubiquiti Join Forces for Live Monitoring

For years, the Ubiquiti community has shared a common "whisper" in the forums: “The hardware is incredible, but what happens if I’m not looking at my phone?” Until now, UniFi Protect has been a world-class passive system. It records the crime in stunning 4K, sends a notification to your pocket, and leaves the rest to you. But as of February 2026, the game has fundamentally changed. With the landmark integration between Ubiquiti and Deep Sentinel, the "passive era" is officially over.

By becoming the first third-party partner to gain early access to Ubiquiti’s new Official Protect API, Deep Sentinel has achieved what was once thought impossible: bringing live, human-verified "Flash Response" to your existing UniFi cameras. We aren't just talking about smarter AI filters; we’re talking about a live guard in a command center halfway across the country seeing a threat on your G6 Turret and shouting, "Get off the property," before your front door is even touched.

In this deep dive, we explore how this "Zero Rip-and-Replace" solution turns your local NVR into a pro-grade security fortress, which specific G-Series cameras support full two-way intervention, and why this API handshake is the most important update to the UniFi ecosystem in a decade.

For years, the Ubiquiti UniFi Protect ecosystem has been the "gold standard" for prosumers who want high-quality hardware and local storage without monthly fees. However, its one Achilles' heel has always been passive monitoring. If an intruder stepped onto your property at 3:00 AM, UniFi would dutifully record it and send a notification to your phone—but if you were asleep, the crime happened anyway.

That changed in February 2026 with the official partnership between Deep Sentinel and Ubiquiti. By leveraging Ubiquiti's newly released Official Protect API, Deep Sentinel has transformed UniFi cameras from passive recorders into active, human-monitored guardians.

The Tech: Why This Isn't Just Another "Integration"

In the past, connecting UniFi to third-party services required unstable RTSP streams or complex workarounds. This new integration is built on an Official API handshake, which offers three major technical advantages:

Edge-AI Efficiency: Deep Sentinel doesn't need to "see" your footage 24/7. It remains idle until your UniFi camera’s onboard AI detects a person. Only then are the metadata and a high-priority stream sent to the Deep Sentinel Live Center.

Low Latency Intervention: Because it uses native API hooks, the lag between a person appearing on your lawn and a live guard speaking through your camera is under 10 seconds.

Encrypted Tunneling: No open ports or exposed firewall rules are required. The connection is handled via a secure API key, keeping your local network private.
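To make the "idle until a person is detected" idea concrete, here is a minimal Python sketch of event gating. It is an illustration only: the `DetectionEvent` type, its field names, and the `should_escalate` function are hypothetical and do not come from the Protect API or from Deep Sentinel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectionEvent:
    """Hypothetical shape of an on-camera detection event (not a real API schema)."""
    camera_id: str
    label: str        # e.g. "person", "vehicle", "animal"
    timestamp: float  # seconds since epoch


def should_escalate(event: DetectionEvent, monitored: set[str]) -> bool:
    """Escalate only person detections from cameras the owner opted in."""
    return event.label == "person" and event.camera_id in monitored


def escalations(events: list[DetectionEvent], monitored: set[str]) -> list[DetectionEvent]:
    """Filter a batch of events down to the ones worth sending to a live guard."""
    return [e for e in events if should_escalate(e, monitored)]


events = [
    DetectionEvent("front-door", "animal", 1.0),
    DetectionEvent("front-door", "person", 2.0),
    DetectionEvent("garage", "person", 3.0),  # camera not enrolled, so ignored
]
monitored = {"front-door"}
sent = escalations(events, monitored)
print([(e.camera_id, e.label) for e in sent])
```

Everything that fails the gate stays on the local NVR, which is why the monitoring service does not need a 24/7 view of the footage.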

Hardware Breakdown: Which Cameras Stop Crime?

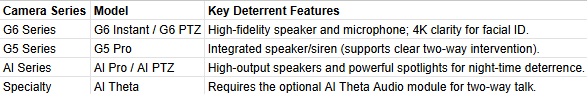

While almost any UniFi camera can be monitored, the "Live Guard" experience is most effective when the guard can speak back. Below are the cameras that support full two-way talk and active deterrents:

Pro Tip: If you have older cameras like the G5 Flex or G5 Bullet, they have microphones (so the guard can hear the intruder) but no speakers. Deep Sentinel can still monitor these, but the guard will only be able to dispatch police, not verbally intervene.

Setup Guide: Linking UniFi to Deep Sentinel

Setting up the integration takes less than 15 minutes. Ensure your UniFi Protect application is updated to v6.2.x or higher.

Phase 1: Generate the API Key

Log in to your UniFi Console (via unifi.ui.com or your local IP).

Navigate to Settings > Control Plane.

Click on the Integrations tab.

Under Your API Keys, select Create New Key.

Name it "Deep Sentinel" and copy the key immediately (you won't be able to see it again).
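If you want to sanity-check the key from a script before handing it to another service, the request might be assembled roughly as below. Treat this as a sketch under stated assumptions: the path prefix, the header name, the `cameras` resource, the `UNIFI_API_KEY` variable, and the IP address are all placeholders chosen for illustration, so confirm the real values in the API documentation your console exposes.

```python
import os

# ASSUMPTION: path prefix and header name are illustrative placeholders;
# confirm both against your console's API documentation.
ASSUMED_BASE_PATH = "/proxy/protect/integration/v1"
ASSUMED_KEY_HEADER = "X-API-KEY"


def build_request(host: str, resource: str, api_key: str) -> tuple[str, dict[str, str]]:
    """Return the URL and headers for one authenticated GET request."""
    if not api_key:
        raise ValueError("API key is empty; generate one in the console first")
    url = f"https://{host}{ASSUMED_BASE_PATH}/{resource.lstrip('/')}"
    headers = {ASSUMED_KEY_HEADER: api_key, "Accept": "application/json"}
    return url, headers


# Read the key from the environment instead of pasting it into source code.
# The fallback string is a dummy value so the sketch runs without a real key.
api_key = os.environ.get("UNIFI_API_KEY", "example-key-not-real")
url, headers = build_request("192.168.1.1", "cameras", api_key)
print(url)
```

Because the key is only shown once, keeping it in an environment variable or a password manager is safer than leaving it in a script or a notes file.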

Phase 2: Connect to Deep Sentinel

Open the Deep Sentinel App on your mobile device.

Go to Settings > Device Manager.

Select Add Device to Hub > UniFi Protect.

Enter your NVR's IP address (or select the cloud-linked console) and paste the API Key you generated.

Follow the prompts to select which cameras you want the guards to monitor and set your "Protection Zones."

The Verdict for Bloggers & Installers

This partnership effectively bridges the gap between DIY Hardware and Enterprise Security. For businesses or high-end residential estates already invested in Ubiquiti, this is the most significant upgrade to the platform since its inception. You keep your local 4K recordings, but you gain a "human in the loop" to stop the crime before it happens.

Is Your Security System Watching or Reasoning? A Look at Ambient.ai’s Pulsar Release

Is your security system just watching, or is it actually reasoning? We break down Ambient.ai’s new Pulsar release to explore how Vision Language Models (VLM) are shifting physical security from passive recording to active intelligence—cutting through the noise to deliver context, not just pixels.

Estimated Read Time: 5 Minutes

Intro

In the physical security world, "noise" is the enemy. Whether it’s a motion sensor triggered by a swaying tree or a legacy analytics system flagging every shadow as a threat, false alarms are the quickest way to burn out a Global Security Operations Center (GSOC).

At CG Security Consulting, we constantly preach the value of cutting through the noise. We believe technology should be a force multiplier, not a distraction. That is why we are paying close attention to Ambient.ai’s latest release: Pulsar.

This isn’t just another camera upgrade. It is a shift from systems that simply see pixels to systems that understand context. Here is our breakdown of what Pulsar is, why it matters, and why it may mark the start of a new era for physical security.

The Core Shift: From Vision to "Vision-Language"

To understand why Pulsar is different, you have to look under the hood—but just briefly.

Most traditional video analytics rely on "detectors." They are trained to recognize specific shapes: a car, a person, a bag. If a person stands near a door, the detector says, "Person detected." It doesn't know if that person is a delivery driver waiting to be buzzed in or a threat actor attempting to tailgate.

Pulsar utilizes a Vision Language Model (VLM). This is the same class of AI technology that powers tools like ChatGPT, but applied to video. Instead of just drawing a box around a person, the system can "describe" what it is seeing in real-time. It combines visual perception with semantic understanding.

In non-technical terms: Your camera system stops acting like a motion sensor and starts acting like a rookie security guard who never blinks. It can reason that "a person loitering by the back door at 2 AM" is different from "a person taking a smoke break at 2 PM," even if the pixels look similar.

Key Features That Caught Our Eye

Ambient.ai’s launch highlighted several features that directly address the efficiency problems we see in our clients’ security operations.

1. Semantic Search (The "Google" for Your Video Footage)

If you have ever tried to find a specific incident in hours of footage, you know the pain. Usually, you are scrubbing through timelines looking for movement. With Pulsar, operators can use natural language queries. You can type, "Show me a person in a red shirt carrying a backpack near the loading dock," and the system retrieves those specific instances. This drastically reduces investigation time from hours to minutes, directly improving the ROI of your security labor.
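For readers who want a feel for how a text query can be matched against footage, here is a toy Python sketch. It assumes each clip already has a text description and ranks clips by word overlap (cosine similarity over bag-of-words counts). This is a simple stand-in technique, not how Pulsar works; a real system would use learned embeddings, and the clip IDs and captions below are invented.

```python
import math
import re
from collections import Counter


def tokens(text: str) -> Counter:
    """Lowercase bag-of-words; a crude stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def search(query: str, clips: dict[str, str], top_k: int = 1) -> list[str]:
    """Return the IDs of the top_k clips whose captions best match the query."""
    q = tokens(query)
    ranked = sorted(clips, key=lambda cid: cosine(q, tokens(clips[cid])), reverse=True)
    return ranked[:top_k]


# Invented captions standing in for machine-generated clip descriptions.
clips = {
    "clip-001": "a delivery truck parked at the loading dock",
    "clip-002": "a person in a red shirt carrying a backpack near the loading dock",
    "clip-003": "two people talking in the lobby",
}
best = search("person red shirt backpack loading dock", clips)
print(best)
```

The point of the sketch is the workflow: the operator describes what they want in words, and the system ranks clips by meaning instead of making a human scrub a timeline.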

2. Agentic Video Walls

The traditional video wall—a grid of 50 live streams that human eyes eventually glaze over—is dead. Pulsar introduces "Agentic" walls that change dynamically. The AI highlights streams where relevant activity is happening right now. It prioritizes the feed that needs attention, effectively telling the operator, "Look here, not there."
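The "look here, not there" behavior boils down to a ranking problem. As a minimal sketch, assume some upstream model gives every camera an activity score between 0 and 1; the wall then shows only the highest-scoring feeds. The camera names, scores, and the `pick_wall_feeds` helper are hypothetical.

```python
import heapq


def pick_wall_feeds(activity: dict[str, float], slots: int) -> list[str]:
    """Return the `slots` camera names with the highest activity score, best first."""
    return heapq.nlargest(slots, activity, key=activity.get)


# Invented scores; in practice these would be refreshed continuously.
scores = {"lobby": 0.10, "loading-dock": 0.92, "parking": 0.35, "server-room": 0.78}
wall = pick_wall_feeds(scores, slots=2)
print(wall)
```

Re-running the selection whenever scores change is what makes the wall dynamic: quiet feeds drop off and busy ones take their place.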

3. Contextual Intent Recognition

This is the "technical" feature with the biggest "non-technical" impact. Pulsar is designed to understand intent. It attempts to distinguish between harmless behavior (someone holding a door for a colleague) and risky behavior (unauthorized tailgating). By processing these nuances at the edge, it aims to eliminate the nuisance alarms that plague most SOCs.

Why This Matters for Your Organization

You don't need to be a tech giant to benefit from this kind of intelligence. Whether you are a municipality managing public safety or a manufacturing plant protecting intellectual property, the implications are clear:

Reduced Fatigue: When your operators aren't chasing false alarms, they are sharper for real threats.

Faster Forensics: Liability claims and investigations can be resolved in minutes, not days.

Proactive vs. Reactive: The shift to "Agentic" security means the system is working with you, alerting you to anomalies you didn't even know to look for.

The CG Security Consulting Take

We often warn our clients about "shiny object syndrome"—buying technology just because it's new. However, Ambient.ai’s Pulsar represents a fundamental shift in how we treat video data. It moves us away from passive recording toward active reasoning.

If your current security strategy feels like it involves more "reacting" than "preventing," it might be time to audit your technology stack.

Ready to see if your security infrastructure is ready for AI? At CG Security Consulting, we help you navigate these complex choices to find the solution that fits your budget and your risk profile. Contact us today for a consultation.

More info & tools to check out

Check out Ambient.ai’s Pulsar playground, where you can upload video clips and compare the video analysis to other top VLM models: Pulsar Playground.

The Monthly Phish Fry: October 2025

Intro

We’re back! And yes, we’re a little later than usual. Our apologies—you could blame our calendar management, or you could blame the digital apocalypse that took down half the internet. (We’re definitely blaming the digital apocalypse.)

Who’s to say, really?

In any case, the fryers are hot and we're ready to serve up this month's catch. It's a weird one, folks. We've got stories that blur the line between the digital and the physical, and others that are just plain bizarre. On the menu for this Monthly Phish Fry:

Cyber-physical tech: What happens when a hacker can literally unlock your front door?

AI Juries: We'll look at the disturbing trend of AI infiltrating the courtroom.

Pixnapping: The bizarre new ransom tactic you need to know about.

How Amazon Broke the Internet: And, of course, the main course. We’ll untangle the technical mess that led to the great outage... and why it will probably happen again.

Grab a fork and let's dig in!

One Security Bot Served Up Raw

Remember that "cyber-physical tech" we promised to fry up? Well, we're starting with a fresh catch called ARGUS, a new robotic security guard that’s being sold as the ultimate hybrid hunter. It's not just a camera on wheels; this bot roams your halls using AI to spot faces and weapons, while also sniffing your network traffic for things like port scans. The big sales pitch is that it can correlate a physical intruder with a digital attack in real-time.

Here's the fishy part: while it’s busy correlating two attack surfaces into one, the researchers themselves admit its accuracy plummets in poor lighting. More importantly, they note that "future work" is still needed to defend it from deepfakes, spoofing, and "adversarial compromise." In other words, we’re building a "security" robot that can't reliably see in the dark, can be fooled by a fake face, and hasn't yet been secured from being tampered with. It's the perfect cyber-physical storm: a "guard" that could be turned into the most sophisticated Trojan horse you’ve ever paid for.

Sounds like a fun game of tag, except the robot is "it" and you're fighting the hacker for the controller. Hey, maybe there’s an idea for your computer science program.

A Jury of… Artificial Peers?

Next up on the menu is that "AI Jury" we promised, and it’s even fishier than it sounds. A law school in North Carolina thought it was a good idea to run a mock trial, replacing a jury of peers with a panel of bots: ChatGPT, Grok, and Claude. The AIs were fed a real-time transcript to "deliberate," and the results were, predictably, a disaster.

A post-trial panel of actual humans was "intensely critical," pointing out that the bots couldn't read body language, lacked any human experience, and are famous for—you know—hallucinating facts. To make it even more absurd, one of the AI "jurors" was Grok, the same bot that once had a public meltdown and started calling itself "MechaHitler."

But the truly scary part isn't that the bots were bad; it's the warning from one professor that the tech industry's "instinct to repair" is the real danger. They won't just stop; they'll "fix" the problem by giving the bots video feeds and "backstories" until they have recursively "repaired" their way right into a real jury box.

As if the legal system wasn’t enough of a circus.

This Month's Crispiest Con: "Pixnapping"

"Pixnapping", a particularly greasy con served up fresh for Android users. This isn't your garden-variety phishing; it’s a patient, slow-cooked attack. A malicious app, running without any special permissions, uses a clever side-channel trick to essentially ask your phone's graphics processor what it's rendering. It then "naps" the data from your screen, one pixel at a time. It may be slow, but it's fast enough to read the 2FA codes right out of your Google Authenticator or peek at your bank app. It's a resurrected vulnerability (CVE-2025-48561) that's already found a workaround for Google's first patch, proving that even old, "fried" attacks can be served up again while they're still hot.

To make matters worse, this is effective against the latest operating system, Android 16.

Full paper: here

This month’s Catch of the Day: How Amazon Broke the Internet

Alright, it’s time for the main course! This is the "catch of the day" we all got to experience, whether we wanted to or not: the great AWS outage. Yes, this is the story of how Amazon broke the internet and why you couldn't use your Ring doorbell, send a Snapchat, or even play Wordle. It turns out "the cloud" isn't some magical sky-computer; it's mostly a bunch of servers in Northern Virginia, and that whole cluster (the all-important US-EAST-1 region) finally got fried.

So what exactly did they overcook? The problem wasn't a cyberattack, but something much more mundane and terrifying: a DNS failure. Think of DNS as the Internet’s phone book. A faulty automated update to the DNS records for a key database service (DynamoDB) essentially set that phone book on fire. Suddenly, apps couldn't find the numbers for the servers they needed to talk to.

This triggered a massive, cascading failure that took everything down with it. We’re talking Roblox, Fortnite, Canva, Coinbase, and even airlines. It was a stark reminder that the entire digital world is basically balanced on one or two plates, and this time, Amazon dropped the whole platter. It's the ultimate example of "concentration risk," and we all got to feel what happens when the central kitchen has a grease fire.
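For the hands-on crowd, the phone-book problem fits in a few lines of Python. This is a toy model, not a reconstruction of AWS internals: the hostnames, addresses, and app names are made up, and "DNS" here is just a dictionary. It shows how one emptied record takes down every app that depends on it.

```python
class ResolutionError(Exception):
    """Raised when a name has no usable address."""


def resolve(records: dict[str, list[str]], name: str) -> str:
    """Look up a name in the toy phone book and return its first address."""
    addresses = records.get(name, [])
    if not addresses:
        raise ResolutionError(f"no address for {name}")
    return addresses[0]


def healthy_apps(records: dict[str, list[str]], dependencies: dict[str, list[str]]) -> list[str]:
    """An app counts as healthy only if every name it depends on resolves."""
    ok = []
    for app, names in dependencies.items():
        try:
            for name in names:
                resolve(records, name)
            ok.append(app)
        except ResolutionError:
            pass  # one missing record is enough to take the app down
    return ok


records = {"db.example.internal": ["10.0.0.5"], "cdn.example.internal": ["10.0.0.9"]}
dependencies = {
    "game": ["db.example.internal", "cdn.example.internal"],
    "bank": ["db.example.internal"],
    "static-site": ["cdn.example.internal"],
}
before = healthy_apps(records, dependencies)
records["db.example.internal"] = []  # a bad update wipes the record
after = healthy_apps(records, dependencies)
print(before, after)
```

Nothing is wrong with the database itself in this model; the apps simply can no longer find it. That is the concentration-risk lesson in miniature.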

If you want a short and frosty breakdown of this internet-wide brain freeze, check out this video.

The After-Dinner Mint (That Tastes Like Malware)

First up, a tasty little morsel about that expensive gaming mouse you love. Its high-performance sensor is so good, it can pick up vibrations from your desk, allowing AI to listen to everything you say. Yes, your mouse is now a microphone. Delicious.

Next, a new hacker gang hit a "new low" by stealing 8,000 children's photos from a nursery for ransom. They quickly apologized and backpedaled after the public backlash, proving even criminals, it seems, are worried about their brand. How touching.

And for the final bite, a reminder that the future is here and it's terrifying. Law enforcement is sounding the alarm that they're being flooded with untraceable, 3D-printed "ghost guns" that can be made by anyone with a printer and a blueprint. Sweet dreams.